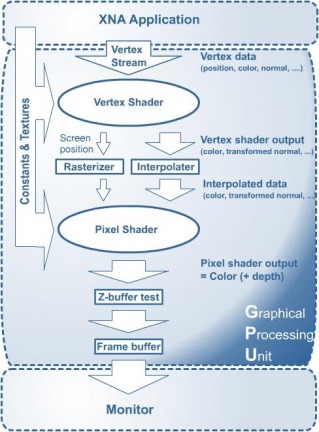

The “rendering pipeline”, as it is called, comprises all the stages from feeding input data to your graphics card to displaying the final output on the screen. The diagram above lays out the pipeline.

The vertex shader is the component that receives the initial vertex stream passed to the GPU. It does the initial processing on the raw stream, while the GPU works out what the user wants drawn from those vertices. For example, using 6 vertices, you could draw a hexagon, 2 triangles, or anything else. The “what to draw” information is handed to the GPU before the vertex stream is processed. In Direct3D-style APIs, the Vertex Declaration describes how each vertex is laid out, and the draw call names the primitive type and count. Together they tell the graphics card something like, “OK, you’re going to be receiving a stream of vertices, and you have to draw at most 3 triangles from it.” The processed vertices then go to two things: the Rasterizer and the Interpolator, which filter and process the data and pass on the required information to the Pixel Shader.
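To make the 6-vertex example concrete, here is a minimal sketch in Python of how the same vertex stream can yield different triangles depending on the primitive type the GPU is told to draw. This runs on the CPU purely for illustration. The function name `assemble_triangles` and the topology strings are made up for this post, not part of any graphics API.

```python
def assemble_triangles(vertices, topology):
    """Group a flat vertex stream into triangles (CPU-side illustration only)."""
    if topology == "triangle_list":
        # Every 3 consecutive vertices form one independent triangle.
        return [tuple(vertices[i:i + 3]) for i in range(0, len(vertices) - 2, 3)]
    if topology == "triangle_strip":
        # Each new vertex forms a triangle with the previous two.
        # (Real GPUs also alternate the winding order; ignored here.)
        return [tuple(vertices[i:i + 3]) for i in range(len(vertices) - 2)]
    raise ValueError(f"unknown topology: {topology}")


# Six hypothetical 2D vertices.
stream = [(0, 0), (1, 0), (0, 1), (1, 1), (0, 2), (1, 2)]

as_list = assemble_triangles(stream, "triangle_list")
as_strip = assemble_triangles(stream, "triangle_strip")

print(len(as_list), len(as_strip))  # prints: 2 4
```

Same six vertices, but a list gives 2 separate triangles while a strip gives 4 connected ones. That is why the GPU needs to be told how to read the stream and cannot guess from the vertices alone.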

Both the Rasterizer and the Interpolator pass their data on to the most fun part of the GPU, the Pixel Shader. It is the job of the pixel shader to determine the color of every pixel on screen, nothing more, nothing less. First, the data from the Rasterizer acts like a sieve: it tells us exactly which pixels we need to be concerned with, and we throw away the rest. The data from the Interpolator is then normally used to determine the color of each of those pixels, but because the pixel shader is programmable, it is free to compute the color some other way. The pixel shader is effectively a programmable function, which leaves its effects as creative as the programmer. I recently tweaked a pixel shader to make all the graphics appear in sepia. The pixel shader acts as a final filter between your data and what is displayed on screen, and a lot of cool effects can be implemented there.
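The "programmable function" idea can be sketched in a few lines of Python. This is not my actual shader (a real one would be written in a shading language and run on the GPU), just a CPU-side imitation. The weights are the commonly quoted sepia coefficients, and `sepia`, `run_pixel_shader`, and the tiny dictionaries standing in for interpolator and rasterizer output are all assumptions made for the example.

```python
def sepia(color):
    """A 'pixel shader': takes one RGB color (floats in 0..1), returns a new one."""
    r, g, b = color
    # Commonly quoted sepia weights; clamp so bright pixels stay within 0..1.
    return (
        min(1.0, 0.393 * r + 0.769 * g + 0.189 * b),
        min(1.0, 0.349 * r + 0.686 * g + 0.168 * b),
        min(1.0, 0.272 * r + 0.534 * g + 0.131 * b),
    )


def run_pixel_shader(interpolated, covered, shader):
    """Run `shader` only on pixels the rasterizer marked as covered."""
    return {xy: shader(interpolated[xy]) for xy in covered}


# Stand-in for interpolator output: a color for each pixel position.
interpolated = {
    (0, 0): (0.0, 0.0, 0.0),
    (1, 0): (1.0, 1.0, 1.0),
    (2, 0): (1.0, 0.0, 0.0),
}
# Stand-in for rasterizer output: the sieve of pixels we actually care about.
covered = {(0, 0), (1, 0)}

frame = run_pixel_shader(interpolated, covered, sepia)
print(sorted(frame))  # prints: [(0, 0), (1, 0)]
```

Swapping `sepia` for any other function of a color changes the look of the whole frame without touching the rest of the pipeline, which is what makes this stage so much fun to play with.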

Now someone might ask, “Why don’t we filter the colors before passing them to the GPU? Wouldn’t that be simpler?” The answer is this: the GPU is much better at it than the CPU. Today, most high-end graphics cards have their own dedicated RAM and processor. This hardware is meant for rendering, so why not use it? The CPU has a dozen other things to do, like running the physics engine, checking for collisions, processing input, gathering network messages, and much more. Taking advantage of the parallelism between your CPU and GPU can go a long way toward boosting performance, especially in a situation where you’re forced to code in straight C (nightmare) just so your code runs faster.

Anyway, just a heads-up for all Graphics and Vision enthusiasts: this is all very cool stuff, but it is slowly becoming more subtle and complex in pursuit of much-needed performance boosts. Until next time!

Asadullah
